Introduction

Project P20_00286

The research project "Automatic detection and grading of prostate cancer from non-expert annotators" (Spanish: "Detección y gradación automática de cáncer de próstata a partir de anotadores no expertos") is funded by FEDER/Junta de Andalucía - Consejería de Transformación Económica, Industria, Conocimiento y Universidades from 2021 to 2022.

The research team consists of four PhD researchers from the Visual Information Processing Group (VIP) at Universidad de Granada, four expert pathologists from the Anatomic Pathology Units of Hospital Universitario Clínico San Cecilio (HUSC) and Hospital Universitario Virgen de las Nieves (HUVN), five undergraduate students with extensive experience in the project's topic, and a PhD researcher from Northwestern University (Evanston, Illinois, USA).

This page provides information about the project's results and publications.

Summary

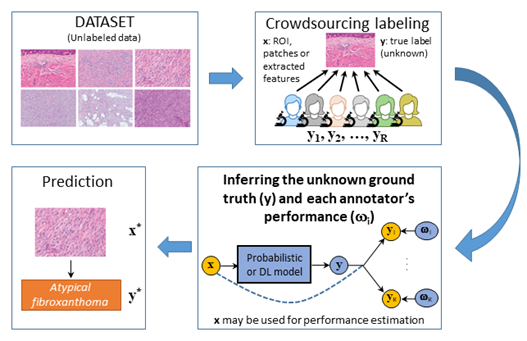

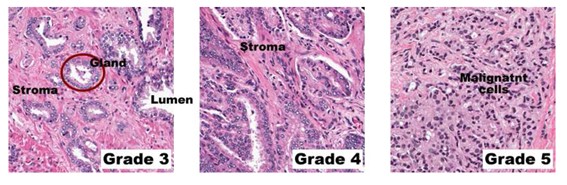

In this project we address the automatic detection and grading of prostate cancer in histological images. Traditionally, this task is performed by an expert pathologist and requires many costly hours of observation, research, and collaboration. By developing crowdsourcing techniques for the detection and grading of prostate cancer in histological images, this project will give pathologists support that allows them to perform this task more quickly and accurately. Most of the project's images are provided by Hospital Universitario Clínico San Cecilio and Hospital Universitario Virgen de las Nieves, international references as two of the few hospitals that digitize all of their biopsies, and whose pathologists are members of the project team. Additional images come from Hospital Clínico de Valencia, through collaboration in national and European projects.

The research team has experience in medical image analysis and, specifically, in the detection and grading of prostate cancer. In the national project "Histopathological image interpretation system for prostate cancer detection" (SICAP), we developed color enhancement and deconvolution methods. Here we intend to apply these techniques, and develop new ones, to remove blur and standardize the images provided by the different hospitals. In SICAP we also developed machine learning methods for prostate cancer detection and grading from a small set of labeled training images. These methods achieved good results for detection but insufficient results for grading, mainly because of the small number of labeled images. Unfortunately, having a single expert pathologist annotate a large image database is not feasible, given the limited time available and the large number of images required. To mitigate this problem, crowdsourcing methods have been proposed in recent years, in which labeling is performed by many annotators with varying degrees of expertise. This approach introduces annotation noise that must be removed.
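As a rough illustration of the two preprocessing steps mentioned above, blur detection and color standardization, the following Python sketch uses two generic techniques that are not necessarily those developed in SICAP or in this project: the variance of the Laplacian as a focus measure (low values suggest a blurred tile), and per-channel mean and standard deviation matching in RGB, a simplified variant of Reinhard-style color transfer (which originally operates in the lαβ color space). Tiles are assumed to be small lists of pixel rows, and `BLUR_THRESHOLD` is a hypothetical placeholder that would need tuning on real WSI tiles.

```python
from statistics import mean, pstdev, pvariance

# Hypothetical cut-off; a real value would have to be tuned on WSI tiles.
BLUR_THRESHOLD = 100.0


def laplacian_variance(gray):
    """Variance of the 4-neighbour Laplacian over the interior pixels.

    `gray` is a list of rows of intensities (at least 3x3). Sharp tiles
    contain strong edges and give a high variance; blurred tiles give a
    low one.
    """
    h, w = len(gray), len(gray[0])
    responses = [
        gray[y - 1][x] + gray[y + 1][x] + gray[y][x - 1] + gray[y][x + 1]
        - 4 * gray[y][x]
        for y in range(1, h - 1)
        for x in range(1, w - 1)
    ]
    return pvariance(responses)


def is_blurred(gray, threshold=BLUR_THRESHOLD):
    """Flag a tile as blurred when its Laplacian variance is below threshold."""
    return laplacian_variance(gray) < threshold


def normalize_colors(source, target):
    """Match the mean and std of each RGB channel of `source` to `target`.

    Both images are lists of rows of (r, g, b) tuples; output values are
    clipped to the 0..255 range.
    """
    params = []
    for c in range(3):
        src = [px[c] for row in source for px in row]
        tgt = [px[c] for row in target for px in row]
        src_sd = pstdev(src)
        scale = pstdev(tgt) / src_sd if src_sd > 0 else 1.0
        params.append((mean(src), mean(tgt), scale))
    return [
        [
            tuple(
                min(255.0, max(0.0, (px[c] - s_mu) * scale + t_mu))
                for c, (s_mu, t_mu, scale) in enumerate(params)
            )
            for px in row
        ]
        for row in source
    ]
```

For example, a uniform gray tile has a Laplacian variance of 0 and is flagged as blurred, while a high-contrast checkerboard tile is not. The normalization shifts and rescales each channel independently, so a tile stained more strongly or more weakly than the reference ends up with the reference's channel statistics.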

A large database of images annotated by non-expert pathologists will be created. Using analytical and deep learning techniques, we will jointly investigate how to efficiently preprocess and standardize histological whole slide images (WSIs) and how to find, through different crowdsourcing strategies, the best model for learning from these noisily annotated images to diagnose with the quality of an expert pathologist. This will facilitate and accelerate prostate cancer detection and grading, improve the training of pathologists facing this complex task for the first time, and support experienced pathologists who have limited experience in prostate cancer grading.

The project will also address the analysis of annotator quality. So far, most crowdsourcing methods proposed in the literature model annotator behavior independently of the image or of the features extracted from it. This assumption is not very realistic, because an annotator may be more proficient with some types of images than with others. In this project we will develop machine learning and deep learning models that capture this behavior more realistically. Different annotator profiles will also be evaluated: part of the image database will be annotated by pathologists not specialized in prostate cancer and by other inexperienced annotators, who will add variability to the annotations.
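For reference, the classic Dawid and Skene (1979) model is an example of the feature-independent approach described above: it assigns each annotator a confusion matrix that does not depend on the image and estimates it jointly with the unknown true labels by expectation-maximization (EM). The minimal Python sketch below implements that baseline, not the feature-dependent models this project proposes. The data layout (a list of `(item, annotator, label)` triples with labels in `0..n_classes-1`) and the `smoothing` constant are illustrative assumptions.

```python
import math
from collections import defaultdict


def dawid_skene(annotations, n_classes, n_iter=50, smoothing=0.01):
    """Dawid-Skene EM on (item, annotator, label) triples (n_iter >= 1).

    Returns (posteriors, confusion): posteriors[item][k] is the estimated
    probability that the item's true class is k, and confusion[a][k][l]
    the probability that annotator a answers l when the true class is k.
    """
    by_item = defaultdict(list)
    for item, annotator, label in annotations:
        by_item[item].append((annotator, label))
    annotators = {a for _, a, _ in annotations}

    # Initialise the posteriors with soft majority voting.
    post = {}
    for item, labels in by_item.items():
        counts = [0.0] * n_classes
        for _, label in labels:
            counts[label] += 1
        post[item] = [c / len(labels) for c in counts]

    for _ in range(n_iter):
        # M-step: class priors and per-annotator confusion matrices,
        # with additive smoothing to avoid zero probabilities.
        prior = [smoothing + sum(p[k] for p in post.values()) for k in range(n_classes)]
        total = sum(prior)
        prior = [v / total for v in prior]
        confusion = {a: [[smoothing] * n_classes for _ in range(n_classes)] for a in annotators}
        for item, labels in by_item.items():
            for a, label in labels:
                for k in range(n_classes):
                    confusion[a][k][label] += post[item][k]
        for a in annotators:
            confusion[a] = [[v / sum(row) for v in row] for row in confusion[a]]

        # E-step: posterior over each item's true class, computed in log
        # space to avoid underflow when an item has many annotations.
        for item, labels in by_item.items():
            logs = [math.log(prior[k]) for k in range(n_classes)]
            for a, label in labels:
                for k in range(n_classes):
                    logs[k] += math.log(confusion[a][k][label])
            top = max(logs)
            weights = [math.exp(v - top) for v in logs]
            norm = sum(weights)
            post[item] = [w / norm for w in weights]

    return post, confusion
```

With two reliable annotators and a third who always swaps the two classes, for instance, the third annotator's estimated confusion matrix concentrates its mass off the diagonal, while the recovered labels still follow the reliable annotators. Because each confusion matrix is shared across all images, this baseline cannot express an annotator who is reliable on some tissue patterns and unreliable on others.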

Finally, we emphasize that the proposed annotation tool and the enhancement, normalization, crowdsourcing, and histological image classification methods will be patentable, transferable to industry, and easily adaptable to other image analysis problems.

Objectives

The goal of this project is to develop a robust artificial intelligence system for prostate cancer detection and grading using WSIs. The project will jointly investigate how to efficiently standardize histological WSIs and how to find, through different crowdsourcing strategies, the best model for diagnosis from these images. This will facilitate and accelerate the recognition and grading of prostate cancer, improve the training of pathologists who face this complex grading task for the first time, and support experienced but time-constrained pathologists in prostate cancer grading.

This general objective is divided into the following specific objectives:

SO1. Build an annotated set of diagnosed and graded prostate cancer WSIs to train and validate the algorithms. The dataset will consist of WSIs provided by Hospital Universitario Clínico San Cecilio (HUSC) and Hospital Universitario Virgen de las Nieves (HUVN) in Granada, and by Hospital Clínico de Valencia. Expert pathologists will manually mark regions of interest in the images, and non-expert pathologists will annotate them for training and validation, providing a labeled corpus to train the crowdsourcing algorithms. The currently available images, already annotated by expert pathologists, will provide the ground truth used to validate and test the algorithms. Additionally, part of the image database will be annotated by pathologists not specialized in prostate cancer and by other inexperienced annotators, which will allow us to evaluate the robustness of our methods to different annotator profiles.

SO2. Develop methods for color enhancement and normalization of WSIs. These methods will detect blurred areas, a common artifact that affects a large proportion of WSIs and hinders analysis, and, where possible, remove the blur using deconvolution techniques. Color normalization will make the system robust to inter- and intra-hospital variations by producing images with a consistent appearance regardless of the scanner, the stain manufacturer, or the initial state of the WSIs. These techniques will help extract more discriminative features and improve the performance of machine learning methods.

SO3. Develop crowdsourcing methods for prostate cancer identification and grading based on the dataset annotated by non-expert pathologists. The methods will extract features from the regions of interest obtained in SO1 and jointly estimate the underlying classifier and the parameters characterizing each annotator's expertise. Relating each annotator's behavior to the true underlying classifier is of great interest for training non-expert pathologists, and will therefore be a notable additional outcome of these methods. We will also explore semi-supervised crowdsourcing methods that exploit the large amount of unlabeled data generated in the project to improve classifier performance.

SO4. Validate the developed algorithms with qualitative and quantitative measures on benchmark WSIs. Using the WSI database from SO1, we will validate the methods developed in SO2 and SO3 and compare their results with those of other state-of-the-art methods. We will also study how including annotations from pathologists not specialized in prostate cancer and from inexperienced annotators affects the robustness of the developed methods. Finally, the best combinations of WSI enhancement, normalization, and crowdsourcing methods will be selected to achieve the best diagnostic performance.
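The objectives do not specify which quantitative measures will be used. One measure commonly reported for ordinal grading tasks is Cohen's kappa with quadratic weights, which penalizes each disagreement by the squared distance between the two grades. The Python sketch below is only an illustration of such a measure; it assumes grades encoded as integers `0..n_classes-1` with `n_classes >= 2`.

```python
def quadratic_weighted_kappa(y_true, y_pred, n_classes):
    """Cohen's kappa with quadratic weights for integer grades 0..n_classes-1.

    1.0 means perfect agreement, 0.0 chance-level agreement, and negative
    values systematic disagreement. Requires n_classes >= 2 and at least
    one rated item.
    """
    n = len(y_true)
    observed = [[0] * n_classes for _ in range(n_classes)]
    for t, p in zip(y_true, y_pred):
        observed[t][p] += 1
    hist_true = [sum(row) for row in observed]
    hist_pred = [sum(col) for col in zip(*observed)]

    num = den = 0.0
    for i in range(n_classes):
        for j in range(n_classes):
            weight = (i - j) ** 2 / (n_classes - 1) ** 2
            expected = hist_true[i] * hist_pred[j] / n  # chance agreement
            num += weight * observed[i][j]
            den += weight * expected
    # den == 0 only when both raters assign one and the same grade throughout.
    return 1.0 if den == 0 else 1.0 - num / den
```

Compared with plain accuracy, this weighting treats a prediction one grade off as a much smaller error than one several grades off, which matches how grading mistakes differ in clinical impact.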